Configure a universal forwarder to monitor a log file on Linux

In this article, we will see how to configure Splunk to monitor a log file and ingest the file's events. In this example, we perform the steps on the same server where Splunk Enterprise is installed. On a universal forwarder the same inputs.conf stanza applies, but SPLUNK_HOME is typically /opt/splunkforwarder.

- Log in (for example, with PuTTY) to the Linux server on which Splunk is installed.

- If it is not already set, set the SPLUNK_HOME environment variable to the directory where Splunk is installed:

  ```shell
  export SPLUNK_HOME=/opt/splunk
  ```

- Go to the directory $SPLUNK_HOME/etc/system/local:

  ```shell
  cd $SPLUNK_HOME/etc/system/local
  ```

- Edit the file inputs.conf (create it if it does not exist) and append the following lines at the end of the file:

  ```
  [monitor:///var/log/messages_copy]
  index=main
  sourcetype=server_log
  ```
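If you prefer to script this step, here is a minimal sketch in POSIX shell. The function name add_monitor_stanza is hypothetical, chosen for illustration; it appends the same three lines as above, but skips the append when a stanza for that path already exists, so running it twice does not create a duplicate stanza.

```shell
#!/bin/sh
# Hypothetical helper: append a [monitor://...] stanza to an inputs.conf file,
# skipping it if a stanza for the same path is already present.
# Arguments: <inputs.conf path> <monitored file> <index> <sourcetype>
add_monitor_stanza() {
    conf_file=$1
    monitored_path=$2
    index_name=$3
    source_type=$4
    stanza="[monitor://${monitored_path}]"

    # Create the directory and the file if this is the first local input.
    mkdir -p "$(dirname "$conf_file")"
    touch "$conf_file"

    # -F: fixed string (the brackets are not a regex), -x: whole-line match.
    if grep -qxF "$stanza" "$conf_file"; then
        echo "Stanza $stanza already present in $conf_file; nothing to do."
        return 0
    fi

    # The leading blank line keeps the new stanza apart from existing content.
    printf '\n%s\nindex=%s\nsourcetype=%s\n' \
        "$stanza" "$index_name" "$source_type" >> "$conf_file"
    echo "Added $stanza to $conf_file"
}
```

For the example in this article, you would call it as `add_monitor_stanza "$SPLUNK_HOME/etc/system/local/inputs.conf" /var/log/messages_copy main server_log`.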

- The path after monitor:// specifies the file(s) that you want Splunk to monitor for events. You can specify a single file, or multiple files using wildcards. In this example, I am using a copy of the system file /var/log/messages. I will later add some events to this file, and we will see how Splunk reads them.

- The index setting specifies the name of the index in which the events are stored.

- The sourcetype setting classifies the data input, i.e., it identifies the type of data being provided.

- Save the file inputs.conf

- Restart Splunk:

  ```shell
  cd $SPLUNK_HOME/bin
  ./splunk stop
  ./splunk start
  ```
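The restart and a quick configuration check can be combined in one function. This is a minimal sketch: the function name check_monitor_input is hypothetical, and it assumes SPLUNK_HOME is set as in the steps above (falling back to /opt/splunk). `splunk restart` performs a stop followed by a start, and `splunk btool inputs list <stanza> --debug` prints the settings Splunk resolves for that stanza from the .conf files on disk, prefixed with the file each setting came from.

```shell
#!/bin/sh
# Hypothetical helper: restart Splunk, then show how btool resolves the
# monitor stanza from the .conf files on disk.
# Argument: <monitored file>, e.g. /var/log/messages_copy
check_monitor_input() {
    splunk_bin="${SPLUNK_HOME:-/opt/splunk}/bin/splunk"
    stanza="monitor://$1"

    if [ ! -x "$splunk_bin" ]; then
        echo "Splunk binary not found at $splunk_bin" >&2
        return 1
    fi

    # 'restart' stops and then starts Splunk, like the two commands above.
    "$splunk_bin" restart || return 1

    # --debug prefixes each setting with the .conf file it was read from,
    # so you can confirm it comes from etc/system/local/inputs.conf.
    "$splunk_bin" btool inputs list "$stanza" --debug
}
```

For this article's file, the call would be `check_monitor_input /var/log/messages_copy`. Note that btool reads the files on disk; it confirms the stanza is syntactically in place, not that events have been indexed.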

Verify, using the query below, that Splunk is reading events from the file and that the events are visible in the Splunk console:

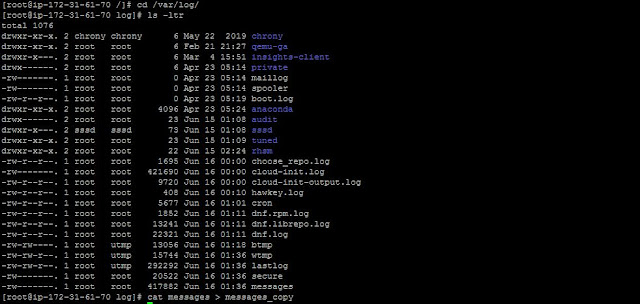

- When I first ran the search, no events were returned because the file /var/log/messages_copy did not exist yet. See the screenshot below:

- Now create /var/log/messages_copy as a copy of /var/log/messages and run the same query again. You should now see the events from the log file /var/log/messages_copy.
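Creating the copy and adding a few new events can be scripted as well. This is a minimal sketch: the function name seed_monitored_file and the syslog tag splunk-test are hypothetical, chosen for illustration. It copies the source log only if the monitored file does not exist yet, then appends syslog-style test lines that you can search for afterwards. On some distributions (for example Ubuntu) the system log is /var/log/syslog rather than /var/log/messages.

```shell
#!/bin/sh
# Hypothetical helper: create the monitored file as a copy of a system log
# (only if it does not exist yet) and append numbered test events to it.
# Arguments: <source log> <monitored copy> <number of test events>
seed_monitored_file() {
    src=$1
    dest=$2
    count=$3

    if [ ! -e "$dest" ]; then
        cp "$src" "$dest" || return 1
    fi

    i=1
    while [ "$i" -le "$count" ]; do
        # Syslog-style prefix: timestamp, host name, tag.
        printf '%s %s splunk-test: test event %d\n' \
            "$(date '+%b %e %H:%M:%S')" "$(uname -n)" "$i" >> "$dest"
        i=$((i + 1))
    done
}
```

For example, `seed_monitored_file /var/log/messages /var/log/messages_copy 5` (run as root, since /var/log/messages is usually readable only by root). Because Splunk keeps monitoring the file, the appended lines should appear in the search results shortly afterwards.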
